A naive Bayes classifier is a simple probabilistic classifier based on applying Bayes' theorem with strong (naive) independence assumptions. A more descriptive term for the underlying probability model would be "independent feature model". An overview of statistical classifiers is given in the article on pattern recognition.


Introduction

In simple terms, a naive Bayes classifier assumes that the presence or absence of a particular feature is unrelated to the presence or absence of any other feature, given the class variable. For example, a fruit may be considered to be an apple if it is red, round, and about 3" in diameter. A naive Bayes classifier considers each of these features to contribute independently to the probability that this fruit is an apple, regardless of the presence or absence of the other features.

For some types of probability models, naive Bayes classifiers can be trained very efficiently in a supervised learning setting. In many practical applications, parameter estimation for naive Bayes models uses the method of maximum likelihood; in other words, one can work with the naive Bayes model without accepting Bayesian probability or using any Bayesian methods.

Despite their naive design and apparently oversimplified assumptions, naive Bayes classifiers have worked quite well in many complex real-world situations. In 2004, an analysis of the Bayesian classification problem showed that there are sound theoretical reasons for the apparently implausible efficacy of naive Bayes classifiers.[1] Still, a comprehensive comparison with other classification algorithms in 2006 showed that naive Bayes classification is outperformed by other approaches, such as boosted trees or random forests.[2]

An advantage of naive Bayes is that it requires only a small amount of training data to estimate the parameters (means and variances of the variables) necessary for classification. Because the variables are assumed to be independent, only the variances of the variables for each class need to be determined, not the entire covariance matrix.

Probabilistic model

Abstractly, the probability model for a classifier is a conditional model.

over a dependent class variable  with a small number of outcomes or classes, conditional on several feature variables

with a small number of outcomes or classes, conditional on several feature variables  through

through  . The problem is that if the number of features

. The problem is that if the number of features  is large or when a feature can take on a large number of values, then basing such a model on probability tables is infeasible. We therefore reformulate the model to make it more tractable.

is large or when a feature can take on a large number of values, then basing such a model on probability tables is infeasible. We therefore reformulate the model to make it more tractable.

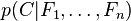

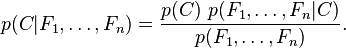

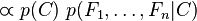

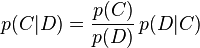

Using Bayes' theorem, this can be written

In plain English the above equation can be written as

In practice, there is interest only in the numerator of that fraction, because the denominator does not depend on  and the values of the features

and the values of the features  are given, so that the denominator is effectively constant. The numerator is equivalent to the joint probability model

are given, so that the denominator is effectively constant. The numerator is equivalent to the joint probability model

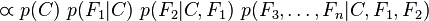

which can be rewritten as follows, using the chain rule for repeated applications of the definition of conditional probability:

-

-

-

-

-

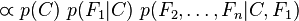

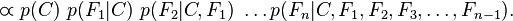

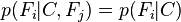

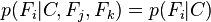

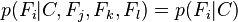

Now the "naive" conditional independence assumptions come into play: assume that each feature  is conditionally independent of every other feature

is conditionally independent of every other feature  for

for  given the category

given the category . This means that

. This means that

,

,  ,

,  , and so on,

, and so on,

for  , and so the joint model can be expressed as

, and so the joint model can be expressed as

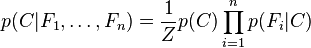

This means that under the above independence assumptions, the conditional distribution over the class variable $C$ is:

$$p(C \mid F_1, \dots, F_n) = \frac{1}{Z}\, p(C) \prod_{i=1}^{n} p(F_i \mid C),$$

where $Z = p(F_1, \dots, F_n)$ (the evidence) is a scaling factor dependent only on $F_1, \dots, F_n$, that is, a constant if the values of the feature variables are known.
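To make the factorization concrete, here is a minimal Python sketch that evaluates $p(C \mid F_1, \dots, F_n) = \frac{1}{Z}\, p(C) \prod_i p(F_i \mid C)$ for discrete features. It assumes the prior and the per-feature conditional probabilities are already known and stored in plain dictionaries; the function name `posterior` and the apple/other probabilities are hypothetical, chosen only for illustration.

```python
from math import prod  # requires Python 3.8+


def posterior(priors, likelihoods, features):
    """Return p(C = c | F_1 = f_1, ..., F_n = f_n) for every class c.

    priors:      {class: p(C = c)}
    likelihoods: {class: [{value: p(F_i = value | C = c)} for each feature i]}
    features:    the observed values (f_1, ..., f_n)
    """
    # Numerator of Bayes' theorem: p(C = c) * prod_i p(F_i = f_i | C = c)
    scores = {
        c: priors[c] * prod(likelihoods[c][i][f] for i, f in enumerate(features))
        for c in priors
    }
    # The evidence Z = p(F_1, ..., F_n) is the sum of the numerators over classes
    z = sum(scores.values())
    return {c: s / z for c, s in scores.items()}


# Hypothetical two-feature example: (is_red, is_round)
priors = {"apple": 0.3, "other": 0.7}
likelihoods = {
    "apple": [{True: 0.8, False: 0.2}, {True: 0.9, False: 0.1}],
    "other": [{True: 0.3, False: 0.7}, {True: 0.4, False: 0.6}],
}
post = posterior(priors, likelihoods, (True, True))
print(post)  # apple ≈ 0.72, other ≈ 0.28
```

For the red, round fruit the two numerators are 0.3 × 0.8 × 0.9 = 0.216 and 0.7 × 0.3 × 0.4 = 0.084, so Z = 0.3 and dividing by it turns the numerators into probabilities that sum to 1.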

Models of this form are much more manageable, because they factor into a so-called class prior $p(C)$ and independent probability distributions $p(F_i \mid C)$. If there are $k$ classes and if a model for each $p(F_i \mid C = c)$ can be expressed in terms of $r$ parameters, then the corresponding naive Bayes model has $(k - 1) + n r k$ parameters. In practice, $k = 2$ (binary classification) and $r = 1$ (Bernoulli variables as features) are common, and so the total number of parameters of the naive Bayes model is $2n + 1$, where $n$ is the number of binary features used for classification.
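The parameter count can be checked with a few lines of Python. The comparison function `full_table_param_count` is an illustrative addition, not part of the text: it counts the free parameters of an unfactored table for $p(C \mid F_1, \dots, F_n)$ over binary features, the kind of probability table described above as infeasible for large $n$. Both function names are hypothetical.

```python
def naive_bayes_param_count(k: int, n: int, r: int) -> int:
    """Parameters of a naive Bayes model with k classes and n features, where each
    p(F_i | C = c) needs r parameters: (k - 1) for the class prior plus n * r * k."""
    return (k - 1) + n * r * k


def full_table_param_count(k: int, n: int) -> int:
    """For comparison: a full table for p(C | F_1, ..., F_n) over n binary features
    needs (k - 1) free parameters for each of the 2**n feature combinations."""
    return (k - 1) * 2 ** n


# Binary classification (k = 2) with n = 30 Bernoulli features (r = 1)
print(naive_bayes_param_count(2, 30, 1))  # 61, i.e. 2n + 1
print(full_table_param_count(2, 30))      # 1073741824
```

The naive Bayes count grows linearly in $n$, while the full table doubles with every added binary feature.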

Parameter estimation and event models

All model parameters (i.e., class priors and feature probability distributions) can be approximated with relative frequencies from the training set. These are maximum likelihood estimates of the probabilities. A class's prior may be calculated by assuming equiprobable classes (i.e., prior = 1 / (number of classes)), or by calculating an estimate for the class probability from the training set (i.e., prior for a given class = (number of samples in the class) / (total number of samples)). To estimate the parameters for a feature's distribution, one must assume a distribution or generate nonparametric models for the features from the training set.[3]
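A minimal sketch of these relative-frequency (maximum likelihood) estimates for categorical features might look as follows. It uses the training-set estimate for the class priors (the equiprobable alternative would simply assign `1 / len(class_counts)` to every class); the function name `fit_categorical_nb` and the toy fruit data are hypothetical.

```python
from collections import Counter, defaultdict


def fit_categorical_nb(X, y):
    """Relative-frequency (maximum likelihood) estimates for naive Bayes.

    X: list of feature tuples, y: list of class labels (same length).
    Returns (priors, likelihoods) with likelihoods[c][i][v] = p(F_i = v | C = c).
    """
    class_counts = Counter(y)
    # Prior for a class = (samples in the class) / (total samples)
    priors = {c: cnt / len(y) for c, cnt in class_counts.items()}

    # counts[c][i][v] = number of class-c samples whose i-th feature equals v
    counts = defaultdict(lambda: defaultdict(Counter))
    for features, c in zip(X, y):
        for i, v in enumerate(features):
            counts[c][i][v] += 1

    likelihoods = {
        c: {
            i: {v: cnt / class_counts[c] for v, cnt in value_counts.items()}
            for i, value_counts in counts[c].items()
        }
        for c in class_counts
    }
    return priors, likelihoods


# Hypothetical toy data: (colour, shape) -> fruit
X = [("red", "round"), ("red", "round"), ("green", "round"), ("yellow", "long")]
y = ["apple", "apple", "apple", "banana"]
priors, likelihoods = fit_categorical_nb(X, y)
# priors == {"apple": 0.75, "banana": 0.25}; p(colour = red | apple) = 2/3
```

Values never seen together with a class (for example, red bananas) are simply absent from the dictionaries, which amounts to an estimate of zero; this is the problem addressed in the section on sample correction.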

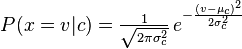

The assumptions on distributions of features are called the event model of the naive Bayes classifier. For discrete features like the ones encountered in document classification (including spam filtering), multinomial and Bernoulli distributions are popular. These assumptions lead to two distinct models, which are often confused.[4][5] When dealing with continuous data, a typical assumption is that the continuous values associated with each class are distributed according to a Gaussian distribution.

For example, suppose the training data contain a continuous attribute, $x$. We first segment the data by the class, and then compute the mean and variance of $x$ in each class. Let $\mu_c$ be the mean of the values in $x$ associated with class $c$, and let $\sigma_c^2$ be the variance of the values in $x$ associated with class $c$. Then, the probability density of some value given a class, $p(x = v \mid c)$, can be computed by plugging $v$ into the equation for a normal distribution parameterized by $\mu_c$ and $\sigma_c^2$. That is,

$$p(x = v \mid c) = \frac{1}{\sqrt{2\pi\sigma_c^2}}\, e^{-\frac{(v - \mu_c)^2}{2\sigma_c^2}}.$$
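The density formula translates directly into code. This Python sketch takes $\mu_c$ and $\sigma_c^2$ as already estimated; as an illustration it plugs in the male height parameters from the sex classification example in this article (mean 5.855, variance 3.5033e-02). The function name `gaussian_likelihood` is hypothetical.

```python
import math


def gaussian_likelihood(v, mu_c, var_c):
    """p(x = v | c) for a normal distribution with class mean mu_c and variance var_c."""
    coefficient = 1.0 / math.sqrt(2.0 * math.pi * var_c)
    return coefficient * math.exp(-((v - mu_c) ** 2) / (2.0 * var_c))


density = gaussian_likelihood(6.0, 5.855, 3.5033e-02)
print(density)  # ≈ 1.5789 (a density, so values above 1 are possible)
```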

Another common technique for handling continuous values is to use binning to discretize the feature values, to obtain a new set of Bernoulli-distributed features. In general, the distribution method is a better choice if there is a small amount of training data, or if the precise distribution of the data is known. The discretization method tends to do better if there is a large amount of training data because it will learn to fit the distribution of the data. Since naive Bayes is typically used when a large amount of data is available (as more computationally expensive models can generally achieve better accuracy), the discretization method is generally preferred over the distribution method.
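A sketch of the discretization approach, assuming equal-width bins (one of several possible binning schemes; the text does not prescribe one). Each bin index is then expanded into 0/1 indicator features, giving the Bernoulli-distributed features described above. The function names are hypothetical, and the heights are taken from the training table in the examples below.

```python
def equal_width_bins(values, n_bins):
    """Map each continuous value to a bin index 0 .. n_bins - 1 using equal-width bins."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / n_bins or 1.0  # avoid division by zero if all values are equal
    # The maximum value would land in bin n_bins, so clamp it into the last bin
    return [min(int((v - lo) / width), n_bins - 1) for v in values]


def to_bernoulli_features(bin_index, n_bins):
    """Expand a bin index into n_bins binary (Bernoulli) indicator features."""
    return [1 if b == bin_index else 0 for b in range(n_bins)]


heights = [6, 5.92, 5.58, 5.92, 5, 5.5, 5.42, 5.75]
bins = equal_width_bins(heights, 2)  # bin 0: [5, 5.5), bin 1: [5.5, 6]
features = [to_bernoulli_features(b, 2) for b in bins]
```

In a real classifier the bin edges would be computed from the training data only and then reused for test samples.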

Sample correction

If a given class and feature value never occur together in the training data, then the frequency-based probability estimate will be zero. This is problematic because it will wipe out all information in the other probabilities when they are multiplied. Therefore, it is often desirable to incorporate a small-sample correction, called a pseudocount, in all probability estimates such that no probability is ever set to be exactly zero.
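One common form of this correction is additive smoothing, which adds a pseudocount $\alpha$ to every count (with $\alpha = 1$ it is known as Laplace smoothing). The exact formula below is an assumption, since the text does not fix one; the function name `smoothed_estimate` is hypothetical.

```python
def smoothed_estimate(count_cv, count_c, n_values, alpha=1.0):
    """Estimate p(F_i = v | C = c) with a pseudocount.

    count_cv: training samples of class c whose feature i equals v
    count_c:  training samples of class c
    n_values: number of distinct values feature i can take
    alpha:    pseudocount added to every value's count (alpha = 1 is Laplace smoothing)
    """
    return (count_cv + alpha) / (count_c + alpha * n_values)


# A binary feature value never seen in 10 samples of a class:
unsmoothed = smoothed_estimate(0, 10, 2, alpha=0.0)  # 0.0 wipes out the whole product
smoothed = smoothed_estimate(0, 10, 2)               # 1/12, small but non-zero
```

Adding $\alpha \cdot n_{\text{values}}$ to the denominator keeps the smoothed estimates for one feature summing to 1 over its possible values.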

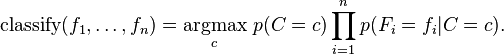

Constructing a classifier from the probability model

The discussion so far has derived the independent feature model, that is, the naive Bayes probability model. The naive Bayes classifier combines this model with a decision rule. One common rule is to pick the hypothesis that is most probable; this is known as the maximum a posteriori or MAP decision rule. The corresponding classifier, a Bayes classifier, is the function $\operatorname{classify}$ defined as follows:

$$\operatorname{classify}(f_1, \dots, f_n) = \underset{c}{\operatorname{argmax}}\ p(C = c) \prod_{i=1}^{n} p(F_i = f_i \mid C = c).$$
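A minimal Python sketch of the MAP rule follows. It compares sums of logarithms rather than products, a common implementation choice (not required by the formula) that avoids floating-point underflow when there are many features; because the logarithm is monotonic, the argmax is unchanged. It assumes no estimated probability is zero (for example, after the sample correction described above), since `math.log(0)` raises an error. The toy probabilities are hypothetical.

```python
import math


def classify(priors, likelihoods, features):
    """MAP rule: argmax over c of p(C = c) * prod_i p(F_i = f_i | C = c).

    Scores are computed as log p(C = c) + sum_i log p(F_i = f_i | C = c);
    all probabilities must be strictly positive.
    """
    def log_score(c):
        return math.log(priors[c]) + sum(
            math.log(likelihoods[c][i][f]) for i, f in enumerate(features)
        )

    return max(priors, key=log_score)


# Hypothetical two-feature model: (is_red, is_round)
priors = {"apple": 0.3, "other": 0.7}
likelihoods = {
    "apple": [{True: 0.8, False: 0.2}, {True: 0.9, False: 0.1}],
    "other": [{True: 0.3, False: 0.7}, {True: 0.4, False: 0.6}],
}
print(classify(priors, likelihoods, (True, True)))    # apple  (0.216 vs 0.084)
print(classify(priors, likelihoods, (False, False)))  # other  (0.006 vs 0.294)
```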

Discussion

Despite the fact that the far-reaching independence assumptions are often inaccurate, the naive Bayes classifier has several properties that make it surprisingly useful in practice. In particular, the decoupling of the class-conditional feature distributions means that each distribution can be independently estimated as a one-dimensional distribution. This helps alleviate problems stemming from the curse of dimensionality, such as the need for data sets that scale exponentially with the number of features.[6] While naive Bayes often fails to produce a good estimate for the correct class probabilities, this may not be a requirement for many applications. For example, the naive Bayes classifier will make the correct MAP decision rule classification so long as the correct class is estimated to be more probable than any other class. This is true regardless of whether the probability estimate is slightly or even grossly inaccurate. In this manner, the overall classifier can be robust enough to ignore serious deficiencies in its underlying naive probability model. Other reasons for the observed success of the naive Bayes classifier are discussed in the literature cited below.

Examples

Sex classification

Problem: classify whether a given person is a male or a female based on the measured features. The features include height, weight, and foot size.

Training

Example training set below.

| sex | height (feet) | weight (lbs) | foot size (inches) |
|---|---|---|---|
| male | 6 | 180 | 12 |
| male | 5.92 (5'11") | 190 | 11 |
| male | 5.58 (5'7") | 170 | 12 |
| male | 5.92 (5'11") | 165 | 10 |
| female | 5 | 100 | 6 |
| female | 5.5 (5'6") | 150 | 8 |
| female | 5.42 (5'5") | 130 | 7 |
| female | 5.75 (5'9") | 150 | 9 |

The classifier created from the training set using a Gaussian distribution assumption would be as follows (the variances are unbiased sample variances, i.e. computed with n − 1 in the denominator):

| sex | mean (height) | variance (height) | mean (weight) | variance (weight) | mean (foot size) | variance (foot size) |
|---|---|---|---|---|---|---|
| male | 5.855 | 3.5033e-02 | 176.25 | 1.2292e+02 | 11.25 | 9.1667e-01 |
| female | 5.4175 | 9.7225e-02 | 132.5 | 5.5833e+02 | 7.5 | 1.6667e+00 |
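The figures in this table can be reproduced with Python's standard `statistics` module, whose `variance` function computes the sample variance (dividing by n - 1), matching the table. The data are transcribed from the training set above; the helper name `class_stats` is hypothetical.

```python
from statistics import mean, variance

# Training set from the table above: (sex, height in feet, weight in lbs, foot size in inches)
training = [
    ("male", 6.00, 180, 12), ("male", 5.92, 190, 11),
    ("male", 5.58, 170, 12), ("male", 5.92, 165, 10),
    ("female", 5.00, 100, 6), ("female", 5.50, 150, 8),
    ("female", 5.42, 130, 7), ("female", 5.75, 150, 9),
]


def class_stats(rows):
    """Return {sex: [(mean, sample variance) for height, weight, foot size]}."""
    stats = {}
    for sex in sorted({row[0] for row in rows}):
        # Transpose this class's rows into one column per feature
        columns = zip(*(row[1:] for row in rows if row[0] == sex))
        stats[sex] = [(mean(col), variance(col)) for col in columns]
    return stats


stats = class_stats(training)
print(stats["male"][0])    # height: mean ≈ 5.855, variance ≈ 3.5033e-02
print(stats["female"][1])  # weight: mean 132.5, variance ≈ 5.5833e+02
```

Switching to `statistics.pvariance` would give the population (maximum likelihood) variances instead, which are slightly smaller for a training set this small.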

Let's say we have equiprobable classes, so P(male) = P(female) = 0.5. This prior probability distribution might be based on our knowledge of frequencies in the larger population, or on frequency in the training set.

Testing

Below is a sample to be classified as a male or female.

| sex | height (feet) | weight (lbs) | foot size (inches) |
|---|---|---|---|
| sample | 6 | 130 | 8 |

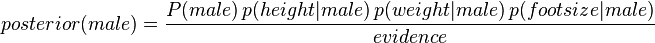

We wish to determine which posterior is greater, male or female. For the classification as male the posterior is given by

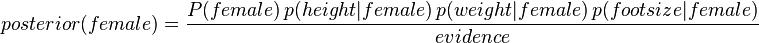

For the classification as female the posterior is given by

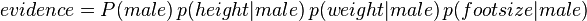

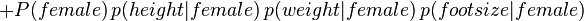

The evidence (also termed normalizing constant) may be calculated:

However, given the sample the evidence is a constant and thus scales both posteriors equally. It therefore does not affect classification and can be ignored. We now determine the probability distribution for the sex of the sample.

,

,

where  and

and  are the parameters of normal distribution which have been previously determined from the training set. Note that a value greater than 1 is OK here – it is a probability density rather than a probability, because height is a continuous variable.

are the parameters of normal distribution which have been previously determined from the training set. Note that a value greater than 1 is OK here – it is a probability density rather than a probability, because height is a continuous variable.

Since posterior numerator is greater in the female case, we predict the sample is female.
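The whole test computation can be sketched in Python, reusing the means and variances from the trained-classifier table and the equal priors assumed above. The evidence is left out, as in the text, so only the posterior numerators are compared; the names `params`, `gaussian_pdf` and `posterior_numerator` are hypothetical.

```python
import math


def gaussian_pdf(v, mu, var):
    """Normal density with mean mu and variance var, evaluated at v."""
    return math.exp(-((v - mu) ** 2) / (2 * var)) / math.sqrt(2 * math.pi * var)


# (mean, variance) for height (feet), weight (lbs), foot size (inches),
# copied from the trained-classifier table above
params = {
    "male": [(5.855, 3.5033e-02), (176.25, 1.2292e+02), (11.25, 9.1667e-01)],
    "female": [(5.4175, 9.7225e-02), (132.5, 5.5833e+02), (7.5, 1.6667e+00)],
}
priors = {"male": 0.5, "female": 0.5}
sample = (6, 130, 8)  # height, weight, foot size of the sample to classify


def posterior_numerator(sex):
    """P(sex) * p(height | sex) * p(weight | sex) * p(foot size | sex); evidence omitted."""
    result = priors[sex]
    for value, (mu, var) in zip(sample, params[sex]):
        result *= gaussian_pdf(value, mu, var)
    return result


numerators = {sex: posterior_numerator(sex) for sex in priors}
prediction = max(numerators, key=numerators.get)
print(numerators)  # male ≈ 6.2e-09, female ≈ 5.4e-04
print(prediction)  # female
```

Dividing each numerator by their sum (the evidence) would give the normalized posteriors; the prediction does not change.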

Document Classification

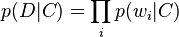

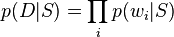

Here is a worked example of naive Bayesian classification to the document classification problem. Consider the problem of classifying documents by their content, for example into spamand non-spam e-mails. Imagine that documents are drawn from a number of classes of documents which can be modelled as sets of words where the (independent) probability that the i-th word of a given document occurs in a document from class C can be written as

(For this treatment, we simplify things further by assuming that words are randomly distributed in the document; that is, words are not dependent on the length of the document, their position within the document relative to other words, or other document context.)

Then the probability that a given document D contains all of the words  , given a class C, is

, given a class C, is

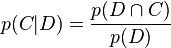
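Under this bag-of-words assumption, $p(D \mid C)$ is just a product over the words of the document. The Python sketch below computes it directly and, as is common in practice, also as a sum of logarithms, since a product of many small probabilities quickly underflows to zero. The word probabilities are hypothetical, and every word of the document is assumed to have an entry.

```python
import math


def document_likelihood(words, word_probs):
    """p(D | C) = prod_i p(w_i | C); word_probs maps each word w to p(w | C)."""
    return math.prod(word_probs[w] for w in words)


def document_log_likelihood(words, word_probs):
    """log p(D | C) = sum_i log p(w_i | C), which avoids underflow on long documents."""
    return sum(math.log(word_probs[w]) for w in words)


# Hypothetical per-word probabilities for one class C
p_word_given_c = {"cheap": 0.05, "pills": 0.02, "meeting": 0.001}
doc = ["cheap", "pills", "cheap"]
likelihood = document_likelihood(doc, p_word_given_c)  # 0.05 * 0.02 * 0.05 = 5e-05
log_likelihood = document_log_likelihood(doc, p_word_given_c)
```

For a 200-word document made only of "meeting", the direct product (0.001 to the 200th power) underflows to 0.0 in double precision, while the log version stays finite.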

The question that we desire to answer is: "what is the probability that a given document D belongs to a given class C?" In other words, what is  ?

?

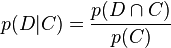

Now by definition

and

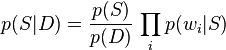

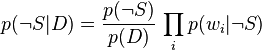

Bayes' theorem manipulates these into a statement of probability in terms of likelihood.

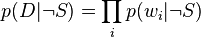

Assume for the moment that there are only two mutually exclusive classes, S and ¬S (e.g. spam and not spam), such that every element (email) is in either one or the other;

and

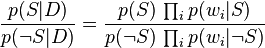

Using the Bayesian result above, we can write:

Dividing one by the other gives:

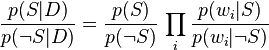

Which can be re-factored as:

Thus, the probability ratio p(S | D) / p(¬S | D) can be expressed in terms of a series of likelihood ratios. The actual probability p(S | D) can be easily computed from log (p(S | D) / p(¬S |D)) based on the observation that p(S | D) + p(¬S | D) = 1.

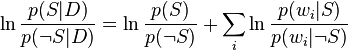

Taking the logarithm of all these ratios, we have:

(This technique of "log-likelihood ratios" is a common technique in statistics. In the case of two mutually exclusive alternatives (such as this example), the conversion of a log-likelihood ratio to a probability takes the form of a sigmoid curve: see logit for details.)
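The conversion mentioned here follows from $p(S \mid D) = e^{L}\, p(\neg S \mid D)$, where $L$ is the log ratio, together with $p(S \mid D) + p(\neg S \mid D) = 1$, which gives $p(S \mid D) = 1 / (1 + e^{-L})$. A small Python sketch, with a hypothetical function name, written so that `math.exp` never overflows for large $|L|$:

```python
import math


def probability_from_log_ratio(log_ratio):
    """Return p(S | D) given L = ln(p(S | D) / p(not S | D)), i.e. 1 / (1 + e^(-L))."""
    if log_ratio >= 0:
        return 1.0 / (1.0 + math.exp(-log_ratio))
    # For negative L use the equivalent form e^L / (1 + e^L), so exp never overflows
    z = math.exp(log_ratio)
    return z / (1.0 + z)


print(probability_from_log_ratio(0.0))          # 0.5: both classes equally likely
print(probability_from_log_ratio(math.log(3)))  # ≈ 0.75: odds of 3 to 1 in favour of spam
```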

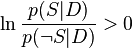

Finally, the document can be classified as follows. It is spam if $p(S \mid D) > p(\neg S \mid D)$ (i.e., $\ln \frac{p(S \mid D)}{p(\neg S \mid D)} > 0$); otherwise it is not spam.
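Putting the pieces together, a hypothetical Python sketch of this decision rule sums the log prior ratio and the per-word log-likelihood ratios and checks the sign. The word probabilities are made up for illustration and are assumed to be non-zero (for instance after a pseudocount correction) and defined for every word in the document.

```python
import math


def log_spam_ratio(words, p_spam, p_word_spam, p_word_ham):
    """ln(p(S|D) / p(not S|D)) = ln(p(S) / p(not S)) + sum_i ln(p(w_i|S) / p(w_i|not S))."""
    total = math.log(p_spam / (1.0 - p_spam))
    for w in words:
        total += math.log(p_word_spam[w] / p_word_ham[w])
    return total


def is_spam(words, p_spam, p_word_spam, p_word_ham):
    """Spam exactly when the log ratio is positive, i.e. p(S|D) > p(not S|D)."""
    return log_spam_ratio(words, p_spam, p_word_spam, p_word_ham) > 0


# Hypothetical word probabilities p(w | S) and p(w | not S)
p_word_spam = {"cheap": 0.05, "pills": 0.02, "meeting": 0.005}
p_word_ham = {"cheap": 0.005, "pills": 0.001, "meeting": 0.05}
print(is_spam(["cheap", "pills"], 0.5, p_word_spam, p_word_ham))  # True
print(is_spam(["meeting"], 0.5, p_word_spam, p_word_ham))         # False
```

With equal priors the prior term is ln 1 = 0, so the decision rests entirely on the word ratios; a skewed prior such as p(S) = 0.8 adds ln 4 to every document's score.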

References

- Zhang, Harry. "The Optimality of Naive Bayes". FLAIRS 2004 conference.

- Caruana, R.; Niculescu-Mizil, A. (2006). "An empirical comparison of supervised learning algorithms". Proceedings of the 23rd International Conference on Machine Learning. CiteSeerX: 10.1.1.122.5901.

- John, George H.; Langley, Pat (1995). "Estimating Continuous Distributions in Bayesian Classifiers". Proceedings of the Eleventh Conference on Uncertainty in Artificial Intelligence. pp. 338–345. Morgan Kaufmann, San Mateo.

- McCallum, Andrew; Nigam, Kamal (1998). "A comparison of event models for Naive Bayes text classification". AAAI-98 Workshop on Learning for Text Categorization. Vol. 752.

- Metsis, Vangelis; Androutsopoulos, Ion; Paliouras, Georgios (2006). "Spam filtering with Naive Bayes – which Naive Bayes?". Third Conference on Email and Anti-Spam (CEAS). Vol. 17.

- An introductory tutorial to classifiers (introducing the basic terms, with a numeric example).

Further reading

- Domingos, Pedro & Michael Pazzani (1997) "On the optimality of the simple Bayesian classifier under zero-one loss". Machine Learning, 29:103–137. (also available online at CiteSeer)

- Rish, Irina. (2001). "An empirical study of the naive Bayes classifier". IJCAI 2001 Workshop on Empirical Methods in Artificial Intelligence. (available online: PDF, PostScript)

- Hand, DJ, & Yu, K. (2001). "Idiot's Bayes - not so stupid after all?" International Statistical Review. Vol 69 part 3, pages 385-399. ISSN 0306-7734.

- Webb, G. I., J. Boughton, and Z. Wang (2005). Not So Naive Bayes: Aggregating One-Dependence Estimators. Machine Learning 58(1). Netherlands: Springer, pages 5–24.

- Mozina M, Demsar J, Kattan M, & Zupan B. (2004). "Nomograms for Visualization of Naive Bayesian Classifier". In Proc. of PKDD-2004, pages 337-348. (available online: PDF)

- Maron, M. E. (1961). "Automatic Indexing: An Experimental Inquiry." Journal of the ACM (JACM) 8(3):404–417. (available online: PDF)

- Minsky, M. (1961). "Steps toward Artificial Intelligence." Proceedings of the IRE 49(1):8-30.

- McCallum, A. and Nigam K. "A Comparison of Event Models for Naive Bayes Text Classification". In AAAI/ICML-98 Workshop on Learning for Text Categorization, pp. 41–48. Technical Report WS-98-05. AAAI Press. 1998. (available online: PDF)

- Rennie J, Shih L, Teevan J, and Karger D. Tackling The Poor Assumptions of Naive Bayes Classifiers. In Proceedings of the Twentieth International Conference on Machine Learning (ICML). 2003. (available online: PDF)

External links

- Software